Can this hand-eye coordination model allow robots to perform processing while moving?

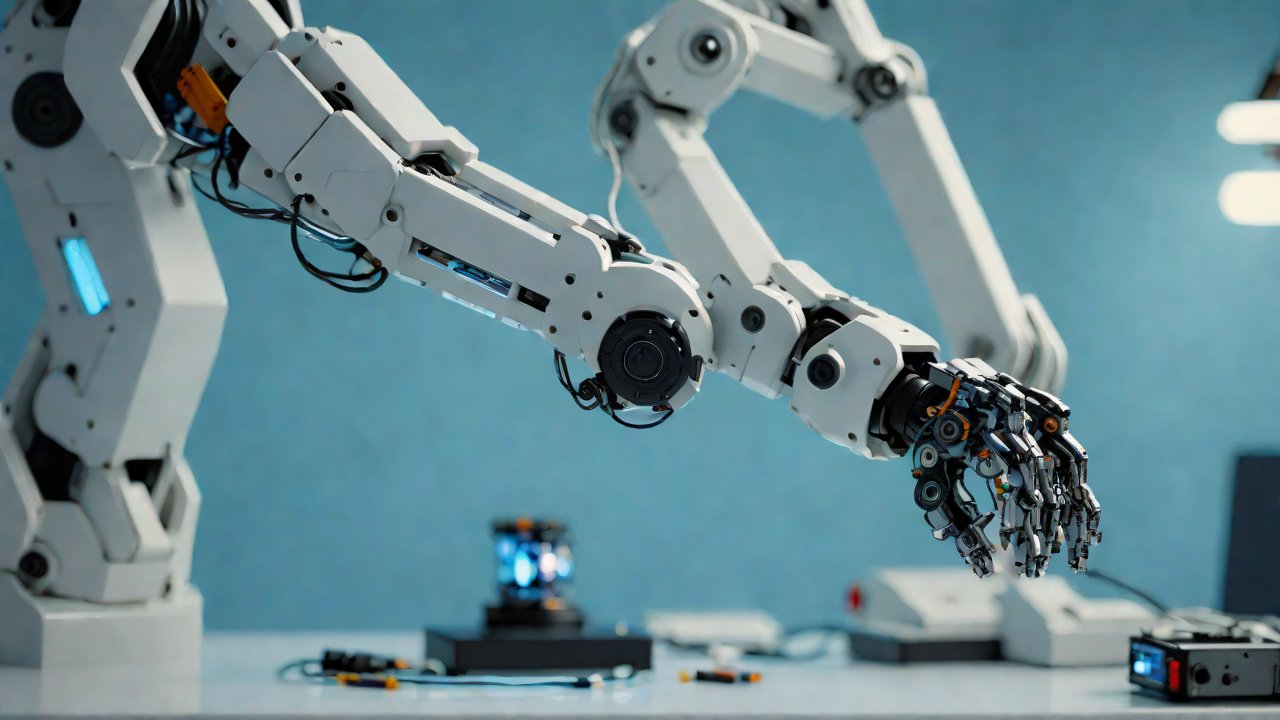

As research in Artificial General Intelligence (AGI) and robotics advances, a practical question arises: can a hand-eye coordination model let a robot keep processing information while it is in motion? The question touches perception, cognition, and motor control at once. Understanding how these three interact is crucial for building the next generation of intelligent robotic systems.

1. Background and Context

Hand-eye coordination has long been a cornerstone of robotics research, with applications ranging from assembly-line manufacturing to surgical procedures. As robots become more sophisticated, the need to process sensor data while the robot is moving, rather than only when it is stationary, becomes more pressing. This report explores the feasibility of using hand-eye coordination models to enable real-time processing in mobile robots.

1.1 Market Overview

The global robotics market is projected to reach $153.54 billion by 2026, with a compound annual growth rate (CAGR) of 13.4% from 2020 to 2026 [1]. The demand for intelligent and autonomous systems is driving innovation in areas such as:

- Autonomous Vehicles: Companies like Waymo and Tesla are pushing the boundaries of self-driving technology.

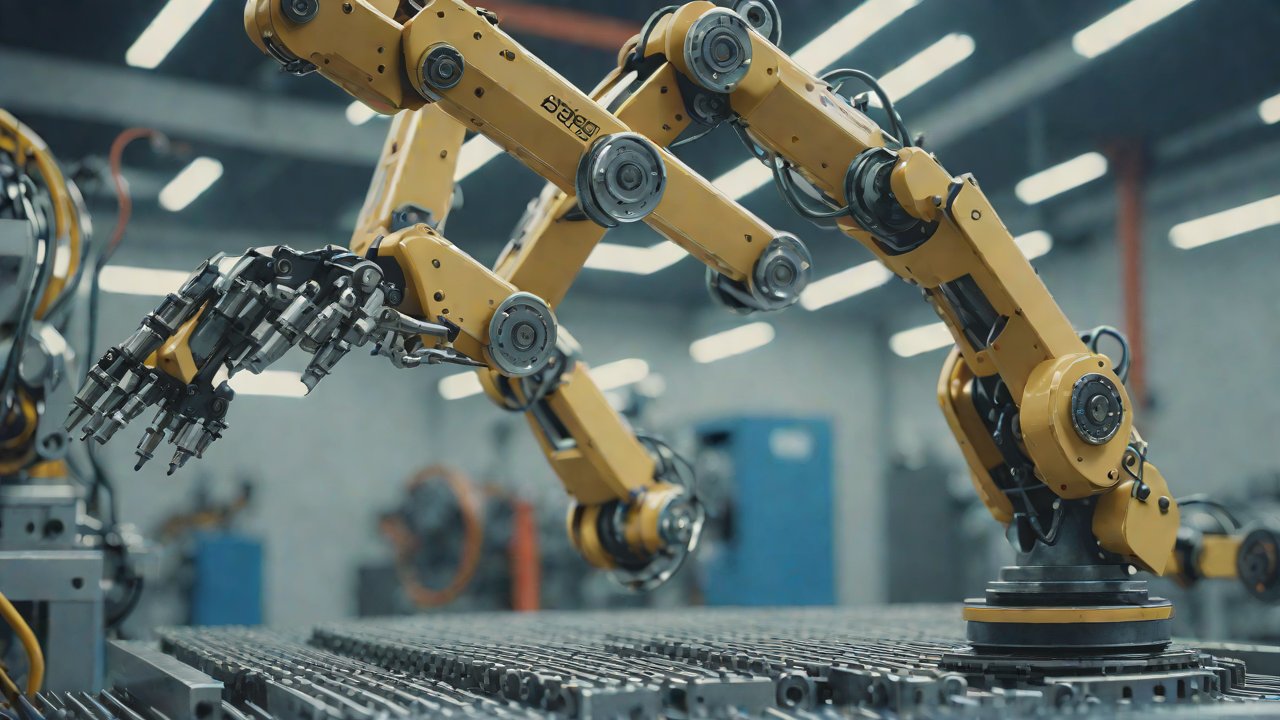

- Industrial Automation: Robots are becoming increasingly prevalent in manufacturing and logistics.

1.2 Technical Perspective

From a technical standpoint, hand-eye coordination models rely on integrating sensory inputs (e.g., visual, auditory) with motor control systems. This integration lets a robot perceive its environment, make decisions, and execute actions in real time. However, many current implementations follow a stop-and-go pattern: the robot either comes to a complete stop to process sensor data or pauses processing while it moves.
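One reason a robot may need to stop is that a visual measurement is already stale by the time it reaches the controller. The following is a minimal sketch, not taken from any specific system, of how a stale observation could be shifted forward in time so the robot can keep moving. It assumes a stationary target, constant-velocity translation without rotation, and a known processing latency. The names `Vec2` and `compensate_for_motion` and all numeric values are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec2:
    x: float
    y: float


def compensate_for_motion(target_at_capture: Vec2,
                          robot_velocity: Vec2,
                          latency_s: float) -> Vec2:
    """Predict where a stationary target sits relative to the robot now.

    Assumption: the robot translates at constant velocity and does not
    rotate between image capture and the moment the result is used.
    """
    # The robot moved by velocity * latency since the image was captured,
    # so the target appears shifted by the opposite amount in the robot frame.
    return Vec2(
        target_at_capture.x - robot_velocity.x * latency_s,
        target_at_capture.y - robot_velocity.y * latency_s,
    )


observed = Vec2(1.0, 0.5)   # metres, relative to the robot at capture time
velocity = Vec2(0.5, 0.0)   # metres per second, robot driving along +x
current = compensate_for_motion(observed, velocity, latency_s=0.2)
print(current)
```

With these illustrative numbers the robot has advanced 0.1 m during the 0.2 s of processing, so the target is treated as 0.1 m closer along x than the image suggested.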

2. Hand-Eye Coordination Models

To address the question at hand, we must first explore existing hand-eye coordination models and their limitations. The following sections outline key architectures and their characteristics:

2.1 Model-Based Approaches

Model-based approaches rely on explicit representations of the robot’s environment and task objectives. These models can be divided into two categories:

| Category | Description |
|---|---|
| Geometric Models | Representations based on geometric primitives (e.g., points, lines, planes) |
| Kinematic Models | Descriptions of the robot’s kinematics and dynamics |
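As an illustration of how a geometric model and a kinematic model can combine, the sketch below maps a point seen by a gripper-mounted camera into the robot's base frame using homogeneous transforms. It assumes an eye-in-hand setup with rotation about the z axis only. The poses `T_base_ee` and `T_ee_cam` and the point `p_cam` are made-up values, and the helper names are hypothetical.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]
Point = Tuple[float, float, float]


def make_transform(yaw_rad: float, tx: float, ty: float, tz: float) -> Matrix:
    """4x4 homogeneous transform: rotation about z by yaw, then translation."""
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return [
        [c, -s, 0.0, tx],
        [s, c, 0.0, ty],
        [0.0, 0.0, 1.0, tz],
        [0.0, 0.0, 0.0, 1.0],
    ]


def compose(a: Matrix, b: Matrix) -> Matrix:
    """Matrix product a @ b for 4x4 transforms."""
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)]
            for i in range(4)]


def transform_point(t: Matrix, p: Point) -> Point:
    """Apply transform t to the 3D point p."""
    ph = (p[0], p[1], p[2], 1.0)
    x, y, z = (sum(t[i][k] * ph[k] for k in range(4)) for i in range(3))
    return (x, y, z)


# Hypothetical poses, chosen only for illustration.
T_base_ee = make_transform(math.pi / 2, 0.4, 0.0, 0.3)  # end effector in base frame (kinematic model)
T_ee_cam = make_transform(0.0, 0.0, 0.0, 0.05)          # camera in end-effector frame (calibration)
p_cam = (0.1, 0.0, 0.2)                                 # detected point in camera frame (geometric model)

T_base_cam = compose(T_base_ee, T_ee_cam)
p_base = transform_point(T_base_cam, p_cam)
print(p_base)
```

In a moving robot, `T_base_ee` would have to be the pose at the instant the image was captured, which is one reason timestamps matter for processing while in motion.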

2.2 Learning-Based Approaches

Learning-based approaches focus on developing models that can learn from experience and adapt to new situations. Key techniques include:

| Technique | Description |
|---|---|
| Deep Learning | Neural networks capable of learning complex patterns and relationships |
| Reinforcement Learning | Agents learn through trial and error, receiving rewards for desired behavior |
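To make the trial-and-error idea concrete, here is a toy tabular Q-learning sketch. It is an assumption-laden illustration, not a method described in this article: a gripper moves along five discrete positions and is rewarded only when it reaches a target cell. The environment, the constants, and the function names are all hypothetical.

```python
import random

N_POSITIONS = 5     # discrete gripper positions 0..4 along one axis
TARGET = 4          # cell that holds the object to reach
ACTIONS = (-1, +1)  # move one cell left or right


def step(position, action):
    """Toy environment: clamp to the track, reward 1.0 on reaching the target."""
    nxt = min(max(position + action, 0), N_POSITIONS - 1)
    done = nxt == TARGET
    return nxt, (1.0 if done else 0.0), done


def train(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_POSITIONS)]
    for _episode in range(episodes):
        pos = rng.randrange(TARGET)          # random non-terminal start
        for _t in range(20):
            # Purely random exploration; Q-learning is off-policy, so the
            # greedy policy can still be learned from random behaviour.
            a = rng.randrange(len(ACTIONS))
            nxt, reward, done = step(pos, ACTIONS[a])
            target = reward if done else reward + gamma * max(q[nxt])
            q[pos][a] += alpha * (target - q[pos][a])
            pos = nxt
            if done:
                break
    return q


q_table = train()
greedy_policy = [
    ACTIONS[max(range(len(ACTIONS)), key=lambda a: q_table[s][a])]
    for s in range(TARGET)
]
print(greedy_policy)
```

The learned greedy policy lists the preferred move for each non-terminal position. Real hand-eye tasks have continuous, high-dimensional observations, which is where the deep learning row of the table comes in.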

3. Enabling Real-Time Processing

To enable real-time processing in mobile robots, several challenges must be addressed:

3.1 Sensory-Motor Integration

The integration of sensory inputs with motor control systems is crucial for hand-eye coordination models. This involves developing algorithms that can efficiently process and fuse multiple sensor modalities (e.g., visual, auditory) while maintaining real-time performance.
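One simple way to fuse two sensor readings of the same quantity is inverse-variance weighting, sketched below. This is a swapped-in textbook technique used for illustration, not something the article prescribes. The sensor values and variances are invented, and `fuse` is a hypothetical helper name.

```python
def fuse(estimates):
    """Fuse (value, variance) pairs by inverse-variance weighting.

    Assumes the measurement errors are independent and unbiased.
    Returns the fused value and its variance.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(var <= 0 for _, var in estimates):
        raise ValueError("variances must be positive")
    weights = [1.0 / var for _, var in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    return value, 1.0 / total


# Hypothetical distance-to-target readings in metres: (value, variance).
camera = (1.00, 0.04)        # noisier estimate
range_sensor = (1.10, 0.01)  # more precise estimate
distance, variance = fuse([camera, range_sensor])
print(distance, variance)
```

The fused value lies closer to the more precise sensor, and the fused variance is smaller than either input variance. Each call costs a handful of arithmetic operations, so it fits comfortably inside a fast control loop.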

3.2 Processing Architectures

Designing processing architectures that can handle the increased computational demands of real-time processing is essential. This may involve:

- Distributed Processing: Leveraging multiple processing units or cores to handle tasks in parallel.

- Specialized Hardware: Utilizing specialized hardware (e.g., GPUs, FPGAs) for accelerated processing.
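The distributed-processing idea can be sketched with two threads: a slow perception worker and a faster control loop that always reads the most recent result instead of waiting for the next one. This is a minimal illustration under stated assumptions. `LatestValue`, `perception_worker`, and `control_loop` are hypothetical names, the sleep calls stand in for real work, and the "detection" is a placeholder computation.

```python
import threading
import time


class LatestValue:
    """Thread-safe single-slot mailbox: writers overwrite, readers see the newest value."""

    def __init__(self):
        self._lock = threading.Lock()
        self._value = None
        self._seq = 0

    def put(self, value):
        with self._lock:
            self._value = value
            self._seq += 1

    def get(self):
        with self._lock:
            return self._seq, self._value


def perception_worker(mailbox, frames, period_s):
    """Stand-in for a slow vision pipeline that publishes one result per frame."""
    for frame in frames:
        time.sleep(period_s)      # simulated processing time
        mailbox.put(frame * 2)    # placeholder "detection"


def control_loop(mailbox, worker, period_s):
    """Runs at its own rate and never blocks waiting for perception."""
    readings = []
    while worker.is_alive():
        readings.append(mailbox.get())
        time.sleep(period_s)      # simulated actuation period
    readings.append(mailbox.get())  # final read after the worker has finished
    return readings


mailbox = LatestValue()
worker = threading.Thread(target=perception_worker, args=(mailbox, range(5), 0.02))
worker.start()
log = control_loop(mailbox, worker, period_s=0.005)
worker.join()
final_seq, final_detection = log[-1]
print(final_seq, final_detection)
```

Because of CPython's global interpreter lock, threads like these mainly help when the perception step waits on I/O or runs in native code. CPU-heavy pipelines would more likely use separate processes or the specialized hardware listed above.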

4. Case Studies and Applications

Two application areas illustrate the potential of hand-eye coordination models for real-time processing:

4.1 Autonomous Vehicles

Self-driving systems from companies like Waymo and Tesla integrate sensory inputs with motor control to make decisions in real time while the vehicle is moving, a perception-to-action loop closely related to hand-eye coordination.

4.2 Industrial Automation

Industrial robots are becoming increasingly prevalent in manufacturing and logistics. Hand-eye coordination models can improve the accuracy and efficiency of robotic tasks such as assembly-line production.

5. Conclusion

In conclusion, hand-eye coordination models hold significant promise for enabling real-time processing in mobile robots. By addressing the challenges outlined above, researchers and developers can unlock new applications and industries for intelligent robotic systems. As we continue to push the boundaries of AGI and robotics, this synergy between perception, cognition, and motor control will remain a vital area of research.

[1] MarketsandMarkets. (2020). Robotics Market by Type, Component, Industry Vertical, and Geography – Global Forecast to 2026.

Note: This article was professionally generated with the assistance of AIGC and has been fact-checked and manually corrected by IoT expert editor IoTCloudPlatForm.