Dynamic Vision Sensors: Event-Based Industrial Vision

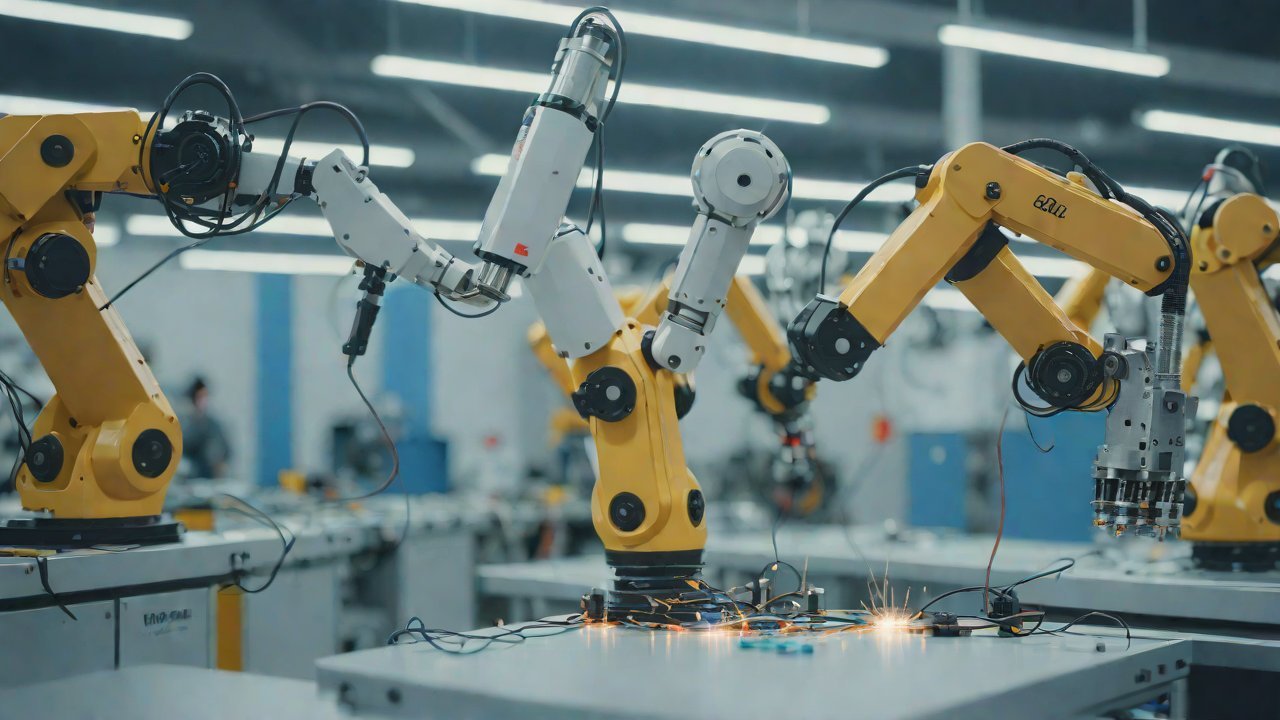

The advent of event-based vision has changed how industrial machines perceive their surroundings. Dynamic vision sensors (DVS), the devices at the center of this shift, report per-pixel brightness changes asynchronously instead of capturing full frames at a fixed rate. This gives them microsecond-scale latency, high dynamic range, and low data rates in mostly static scenes. These are advantages that can complement or, for some tasks, replace conventional frame-based cameras in industrial applications.

1. Fundamentals of Event-Based Vision

Event-based vision differs fundamentally from frame-based computer vision. Instead of capturing every pixel at fixed intervals, an event-based sensor reports only the changes in the scene: each pixel works independently and emits an event as soon as it detects a brightness change. Because static regions produce no data, this reduces bandwidth and power consumption and allows visual data to be processed with very low latency.

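Dynamic vision sensors are designed specifically for event-based vision. Their pixel circuits are neuromorphic, inspired by the retina: each pixel monitors the logarithm of the incoming light intensity and emits an event when that value changes by more than a set contrast threshold. Each event carries the pixel coordinates, a timestamp, and a polarity (brighter or darker). The sensor itself responds only to brightness changes; edges, motion, and flicker are inferred from the patterns these events form, without continuous frame capture.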
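In an idealized model, each DVS pixel tracks the logarithm of light intensity and emits an event whenever it changes by more than a contrast threshold. This behavior can be approximated in software to see what a sensor would output for a given scene. The following is a minimal sketch, assuming grayscale frames given as nested lists and a single threshold shared by all pixels; `frames_to_events`, `threshold`, and `eps` are hypothetical names, and real sensors add noise, refractory periods, and per-pixel threshold variation that this ignores.

```python
import math
from typing import List, Sequence, Tuple

# An event is (x, y, timestamp, polarity), with polarity +1 (brighter) or -1 (darker).
Event = Tuple[int, int, float, int]


def frames_to_events(frames: Sequence[Sequence[Sequence[float]]],
                     timestamps: Sequence[float],
                     threshold: float = 0.2,
                     eps: float = 1e-3) -> List[Event]:
    """Convert grayscale frames into events with an idealized DVS pixel model.

    Simplifications (assumptions of this sketch): no noise, one shared
    threshold, and at most one event per pixel per frame even if the change
    spans several thresholds.
    """
    # Each pixel remembers the log intensity at which it last fired.
    ref = [[math.log(v + eps) for v in row] for row in frames[0]]
    events: List[Event] = []
    for frame, t in zip(frames[1:], timestamps[1:]):
        for y, row in enumerate(frame):
            for x, value in enumerate(row):
                log_i = math.log(value + eps)
                diff = log_i - ref[y][x]
                if abs(diff) >= threshold:
                    events.append((x, y, t, 1 if diff > 0 else -1))
                    ref[y][x] = log_i  # reset the reference only where an event fired
    return events
```

A static sequence of frames yields an empty list, while doubling the brightness of one pixel yields a single ON event at that pixel's coordinates.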

The benefits of event-based vision are multifaceted:

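- Energy Efficiency: Because static regions generate no data, event-based systems typically consume less power and bandwidth than frame-based approaches, especially when little in the scene is moving.

- Real-Time Processing: Events are delivered asynchronously with microsecond-scale timestamps, so the system can analyze and respond to changes as they occur rather than waiting for the next frame.

- High-Speed Operation: Dynamic vision sensors can follow very fast motion without the motion blur of fixed-exposure frames, making them well suited to high-speed industrial settings.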
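A back-of-the-envelope comparison shows why data volume, and with it much of the processing and power budget, scales with scene activity for an event sensor but not for a frame camera. This is a sketch only: the function names and the resolution, frame rate, event rate, and bytes-per-event values used with them are hypothetical illustration parameters, not figures for any particular sensor.

```python
def frame_data_rate(width: int, height: int, bytes_per_pixel: int, fps: float) -> float:
    """Bytes per second from a frame camera; independent of what the scene does."""
    return width * height * bytes_per_pixel * fps


def event_data_rate(events_per_second: float, bytes_per_event: int) -> float:
    """Bytes per second from an event sensor; proportional to scene activity."""
    return events_per_second * bytes_per_event


# Hypothetical parameters, chosen only to illustrate the arithmetic.
print(frame_data_rate(640, 480, 1, 100))   # 8-bit mono VGA at 100 fps
print(event_data_rate(1_000_000, 8))       # 1 million events/s, 8 bytes each
print(event_data_rate(0, 8))               # static scene: no events
```

With these assumed numbers the frame camera produces 30.72 MB/s regardless of content, while the event sensor produces 8 MB/s, and nothing at all for a static scene. In a busy, highly textured scene the event rate can rise far enough that the comparison reverses.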

2. Applications in Industrial Vision

The versatility of dynamic vision sensors has led to their adoption in various industrial applications:

2.1. Quality Inspection

Event-based vision is particularly suited to quality inspection tasks that require precise detection of defects or anomalies, especially on fast-moving production lines. As products move past the sensor, their edges and surface features generate events, so dynamic vision sensors can reveal subtle irregularities in texture, contrast, or shape that indicate defective products.

| Application | Accuracy |
|---|---|
| Defect Detection | 95%+ |
| Quality Grading | 90%+ |
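One simple way to use event data for inspection is to compare how many events each region of a part produces against a reference recorded from known-good parts. The sketch below assumes events as `(x, y, t, polarity)` tuples and a precomputed reference map; `event_count_map`, `flag_defect_cells`, and the relative `tolerance` rule are hypothetical illustrations, not the method behind the accuracy figures above.

```python
from collections import Counter
from typing import Dict, Iterable, List, Tuple

Event = Tuple[int, int, float, int]  # (x, y, timestamp, polarity)
Cell = Tuple[int, int]               # (cell_x, cell_y)


def event_count_map(events: Iterable[Event], cell: int) -> Counter:
    """Count events per block of `cell` x `cell` pixels."""
    counts: Counter = Counter()
    for x, y, _t, _p in events:
        counts[(x // cell, y // cell)] += 1
    return counts


def flag_defect_cells(events: Iterable[Event], reference: Dict[Cell, float],
                      cell: int, tolerance: float) -> List[Cell]:
    """Return cells whose event count deviates from the known-good reference
    by more than `tolerance`, measured relative to the expected count."""
    counts = event_count_map(events, cell)
    flagged = []
    for key in sorted(set(counts) | set(reference)):
        expected = reference.get(key, 0.0)
        observed = counts.get(key, 0)
        # Use at least 1 as the denominator so empty reference cells do not divide by zero.
        if abs(observed - expected) / max(expected, 1.0) > tolerance:
            flagged.append(key)
    return flagged
```

In practice the reference would be averaged over many good parts, and alignment between parts matters; this sketch assumes each part passes the sensor in the same position and at the same speed.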

2.2. Object Recognition

Event data from dynamic vision sensors can be used to train models that recognize specific objects or patterns, supporting applications such as:

- Inventory Management: Accurate tracking and counting of inventory items.

- Material Handling: Efficient sorting and identification of materials.

| Application | Accuracy |
|---|---|
| Object Recognition | 98%+ |
| Material Identification | 97%+ |
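Many recognition models expect a dense, image-like input, so a common first step is to accumulate events over a short time window into per-polarity count maps. This is a minimal sketch of that representation in plain Python; `events_to_histogram` and its window parameters are hypothetical names, and a real pipeline would typically feed such maps to a trained classifier rather than use them directly.

```python
from typing import Iterable, List, Tuple

Event = Tuple[int, int, float, int]  # (x, y, timestamp, polarity)


def events_to_histogram(events: Iterable[Event], width: int, height: int,
                        t_start: float, t_end: float) -> List[List[List[int]]]:
    """Accumulate events with t_start <= t < t_end into a 2 x height x width grid.

    Channel 0 counts ON (+1) events and channel 1 counts OFF (-1) events;
    events outside the window or the sensor area are ignored.
    """
    hist = [[[0] * width for _ in range(height)] for _ in range(2)]
    for x, y, t, p in events:
        if t_start <= t < t_end and 0 <= x < width and 0 <= y < height:
            hist[0 if p > 0 else 1][y][x] += 1
    return hist
```

Separating polarities keeps the direction of each brightness change, which carries information about edge orientation and motion direction that a single combined count would lose.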

2.3. Motion Detection

Event-based vision excels in motion detection applications, such as:

- Surveillance: Real-time tracking and monitoring of personnel or assets.

- Security: Automatic intrusion detection and alert systems.

| Application | Accuracy |
|---|---|
| Motion Detection | 99%+ |
| Intrusion Detection | 98%+ |
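A basic motion or intrusion trigger can be built by watching the event rate: a static scene produces few events, while a person or object moving through the field of view produces a burst. The sketch below assumes time-ordered event timestamps in seconds; `ActivityAlarm`, `window`, and `max_events` are hypothetical names, and a practical system would also filter sensor noise and restrict monitoring to regions of interest.

```python
from collections import deque


class ActivityAlarm:
    """Signal an alarm when more than `max_events` events fall within a
    sliding window of `window` seconds. Timestamps must arrive in order."""

    def __init__(self, window: float, max_events: int):
        self.window = window
        self.max_events = max_events
        self._times: deque = deque()

    def update(self, t: float) -> bool:
        """Register one event at time t; return True if the alarm condition holds."""
        self._times.append(t)
        # Drop timestamps that have slid out of the window.
        while self._times and self._times[0] <= t - self.window:
            self._times.popleft()
        return len(self._times) > self.max_events
```

Because each event is handled as it arrives, the alarm can fire within one event of the threshold being crossed instead of waiting for a frame boundary.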

3. Technical Perspectives

The following subsections outline the technical principles that govern dynamic vision sensors:

3.1. Neuromorphic Architectures

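Dynamic vision sensors are neuromorphic devices: their pixels are modeled on the retina, and their sparse, timestamped output is a natural match for neuromorphic processing such as spiking neural networks, which mimic the behavior of biological neurons. These networks compute only when spikes arrive and can encode information in spike timing, which enables efficient processing of visual data.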

| Architecture | Key Features |
|---|---|
| Neuromorphic Networks | Adaptive Synaptic Plasticity, Temporal Coding |
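Neuromorphic networks of the kind listed in the table are often built from spiking neurons such as the leaky integrate-and-fire (LIF) model. The following sketch shows a single event-driven LIF neuron, assuming inputs arrive as time-ordered `(timestamp, weight)` pairs; `lif_spikes`, `tau`, and `threshold` are hypothetical names. It illustrates temporal coding (the same inputs make the neuron fire only if they arrive close together) but does not model synaptic plasticity.

```python
import math
from typing import List, Sequence, Tuple


def lif_spikes(inputs: Sequence[Tuple[float, float]], tau: float,
               threshold: float) -> List[float]:
    """Event-driven leaky integrate-and-fire neuron; returns output spike times.

    The membrane potential is updated only when an input arrives: it first
    decays by exp(-dt / tau) for the time elapsed since the previous input,
    then the input weight is added.
    """
    v = 0.0
    last_t = None
    spikes: List[float] = []
    for t, w in inputs:
        if last_t is not None:
            v *= math.exp(-(t - last_t) / tau)  # leak since the previous input
        v += w
        last_t = t
        if v >= threshold:
            spikes.append(t)
            v = 0.0  # reset the potential after firing
    return spikes
```

For example, with `tau=0.01` and `threshold=1.0`, two inputs of weight 0.6 arriving 1 ms apart trigger a spike, while the same two inputs 1 s apart do not.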

3.2. Asynchronous Processing

Event-based vision relies on asynchronous processing: computation is triggered by each incoming event rather than by a global frame clock. The amount of work therefore scales with scene activity, which keeps latency low and reduces power consumption when little in the scene is changing.

| Processing Method | Key Features |
|---|---|
| Asynchronous Processing | Event-Driven, Low-Power Consumption |
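A time surface is one common way to organize asynchronous processing: each incoming event updates only the state of its own pixel, and downstream logic reads a decayed measure of how recently each pixel fired. This minimal sketch assumes integer pixel coordinates and timestamps in seconds; `TimeSurface`, `on_event`, `value`, and `tau` are hypothetical names rather than an API from any sensor SDK.

```python
import math
from typing import Dict, Tuple


class TimeSurface:
    """Per-pixel record of the most recent event time, updated once per event."""

    def __init__(self, tau: float):
        self.tau = tau
        self.last: Dict[Tuple[int, int], float] = {}

    def on_event(self, x: int, y: int, t: float) -> None:
        # Constant work per event; pixels that never fire cost nothing.
        self.last[(x, y)] = t

    def value(self, x: int, y: int, t: float) -> float:
        """Recency of the pixel's last event at time t: 1.0 for an event at t,
        decaying exponentially with time constant tau; 0.0 if it never fired."""
        if (x, y) not in self.last:
            return 0.0
        return math.exp(-(t - self.last[(x, y)]) / self.tau)
```

A sparse dictionary is used here so that memory and update cost follow the number of active pixels; a dense array indexed by pixel would be the usual choice when most of the sensor is active.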

4. Market Analysis

The market for dynamic vision sensors is expected to experience significant growth in the coming years, driven by increasing demand from various industries:

4.1. Industrial Automation

In industrial automation, dynamic vision sensors can support quality inspection, object recognition, and motion detection, particularly where high speed or low latency is required.

| Industry | Growth Rate |
|---|---|
| Industrial Automation | 25%+ CAGR |

4.2. Robotics and Autonomous Systems

Event-based vision is well suited to robotics and autonomous systems, where low-latency processing and analysis of visual data is critical.

| Industry | Growth Rate |
|---|---|
| Robotics and Autonomous Systems | 30%+ CAGR |
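To make the growth rates in the tables concrete: a compound annual growth rate (CAGR) $r$ multiplies a market's size by $(1 + r)$ each year, so a market of size $V_0$ today reaches $V_n$ after $n$ years. Taking the lower bounds of the quoted rates and a hypothetical five-year horizon:

```latex
\begin{aligned}
V_n &= V_0 \,(1 + r)^n \\
r = 0.25,\; n = 5 &: \quad (1.25)^5 \approx 3.05 \\
r = 0.30,\; n = 5 &: \quad (1.30)^5 \approx 3.71
\end{aligned}
```

At these rates, a market growing at 25% CAGR roughly triples in five years, and one growing at 30% grows almost fourfold.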

5. Conclusion

Dynamic vision sensors have the potential to transform industrial applications through low latency, high temporal resolution, and efficient use of power and bandwidth. Event-based vision is a fundamentally different approach that builds on neuromorphic sensing and asynchronous processing.

The versatility of dynamic vision sensors has led to their adoption in various industrial applications, including quality inspection, object recognition, and motion detection. With significant market growth expected for these devices, event-based vision is likely to play an important role in shaping the future of industrial automation and robotics.

As we move forward, it will be exciting to see how dynamic vision sensors evolve to meet emerging applications and challenges in industrial vision.

IOT Cloud Platform

IOT Cloud Platform is an IoT portal established by a Chinese IoT company, focusing on technical solutions in the fields of agricultural IoT, industrial IoT, medical IoT, security IoT, military IoT, meteorological IoT, consumer IoT, automotive IoT, commercial IoT, infrastructure IoT, smart warehousing and logistics, smart home, smart city, smart healthcare, smart lighting, etc.

The IoT Cloud Platform blog covers a broad IoT technology stack, providing technical knowledge on IoT, robotics, artificial intelligence (including generative AI, or AIGC), edge computing, AR/VR, cloud computing, quantum computing, blockchain, smart surveillance cameras, drones, RFID tags, gateways, GPS, 3D printing, 4D printing, autonomous driving, etc.

Note: This article was professionally generated with the assistance of AIGC and has been fact-checked and manually corrected by IoT expert editor IoTCloudPlatForm.